VRVis Competence Center

The VRVis K1 Research Center is the leading application-oriented research center in the area of virtual reality (VR) and visualization (Vis) in Austria and is internationally recognized.

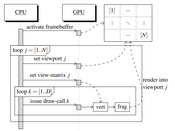

Superhumans - Walking Through Walls

In recent years, virtual and augmented reality have gained widespread attention because of newly developed head-mounted displays. For the first time, mass-market penetration seems plausible. In addition, range sensors are on the verge of being integrated into smartphones, as evidenced by prototypes such as the Google Tango device, making ubiquitous on-line acquisition of 3D data a possibility. The combination of these two technologies – displays and sensors – promises applications in which users are directly immersed in 3D data that was just captured live. However, the captured data needs to be processed and structured before it is displayed. For example, sensor noise needs to be removed and normals need to be estimated for local surface reconstruction. The challenge is that these operations involve a large amount of data, and to ensure a lag-free user experience they need to be performed in real time, i.e., in just a few milliseconds per frame.

In this proposal, we exploit the fact that dynamic point clouds captured in real time are often only relevant for display and interaction in the current frame and inside the current view frustum. In particular, we propose a new view-dependent data structure that permits efficient connectivity creation and traversal of unstructured data, which will speed up surface recovery, e.g. for collision detection. Classifying occlusions comes at no extra cost, which will allow quick access to occluded layers in the current view. This enables new methods to explore and manipulate dynamic 3D scenes that go beyond interaction methods relying on physics-based metaphors like walking or flying, lifting interaction with 3D environments to a “superhuman” level.
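The idea of a view-dependent structure with occlusion layers can be illustrated with a deliberately simplified sketch. It is not the data structure proposed in the project; it only assumes a pinhole camera, points already given in camera space, and a per-pixel bucket grid. All names (`build_view_grid`, `depth_layers`, `front_neighbors`) and parameters are hypothetical and chosen for illustration.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple

Point = Tuple[float, float, float]   # camera-space (x, y, z); z > 0 is depth
Pixel = Tuple[int, int]              # integer screen coordinates (u, v)
Grid = Dict[Pixel, List[Tuple[float, int]]]


def build_view_grid(points: List[Point], focal: float,
                    width: int, height: int) -> Grid:
    """Bucket points by the pixel they project to (assumed pinhole model).

    Points behind the camera or outside the image are dropped, so the
    structure only ever holds data inside the current view frustum.
    """
    grid: Grid = defaultdict(list)
    for index, (x, y, z) in enumerate(points):
        if z <= 0.0:
            continue  # behind the camera
        u = math.floor(focal * x / z + width / 2)
        v = math.floor(focal * y / z + height / 2)
        if 0 <= u < width and 0 <= v < height:
            grid[(u, v)].append((z, index))
    for bucket in grid.values():
        bucket.sort()  # front-to-back: entry 0 is visible, the rest occluded
    return dict(grid)


def depth_layers(grid: Grid, pixel: Pixel) -> List[int]:
    """Point indices at a pixel, ordered from nearest to farthest."""
    return [index for _, index in grid.get(pixel, [])]


def front_neighbors(grid: Grid, pixel: Pixel) -> List[int]:
    """Nearest point of each of the 8 adjacent pixels (connectivity candidates)."""
    u, v = pixel
    result = []
    for dv in (-1, 0, 1):
        for du in (-1, 0, 1):
            if (du, dv) != (0, 0) and (u + du, v + dv) in grid:
                result.append(grid[(u + du, v + dv)][0][1])
    return result


if __name__ == "__main__":
    cloud = [(0.0, 0.0, 1.0), (0.0, 0.0, 3.0), (0.15, 0.0, 1.0)]
    view = build_view_grid(cloud, focal=10.0, width=8, height=8)
    print(depth_layers(view, (4, 4)))     # visible point first, then occluded
    print(front_neighbors(view, (4, 4)))  # candidates for local connectivity
```

In this sketch the depth ordering inside each bucket is what makes occluded layers directly addressable, and adjacency in screen space stands in for connectivity between otherwise unstructured points. A real-time implementation would more plausibly run on the GPU and rebuild the structure every frame.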

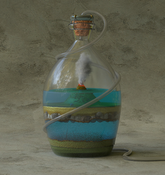

Smart Communities and Technologies: 3D Spatialization

The Research Cluster "Smart Communities and Technologies" (Smart CT) at TU Wien will provide the scientific underpinnings for next-generation complex smart city and community infrastructures. Cities are ever-evolving, complex cyber-physical systems of systems covering a multitude of different areas. The initial concept of smart cities and communities started with cities utilizing communication technologies to deliver services to their citizens and evolved to using information technology to be smarter and more efficient in the use of their resources. In recent years, however, information technology has changed significantly, and with it the resources and areas addressable by a smart city have broadened considerably. They now cover areas like smart buildings, smart products and production, smart traffic systems and roads, autonomous driving, smart grids for managing energy hubs and electric car utilization, and urban environmental systems research.

3D spatialization creates the link between the internet of cities infrastructure and the actual 3D world in which a city is embedded in order to perform advanced computation and visualization tasks. Sensors, actuators and users are embedded in a complex 3D environment that is constantly changing. Acquiring, modeling and visualizing this dynamic 3D environment are the challenges we need to face using methods from Visual Computing and Computer Graphics. 3D Spatialization aims to make a city aware of its 3D environment, allowing it to perform spatial reasoning to solve problems like visibility, accessibility, lighting, and energy efficiency.

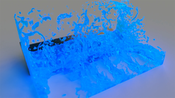

Computational Design of Geometric Materials

In this project we want to research novel materials whose mechanical behavior is described by the complexity of their geometry. Such “geometric materials” are cellular structures whose properties depend on the shape and the connectivity of their cells, while the actual physical substance they are built of is constant across the entire object.

Real-Time Shape Acquisition with Sensor-Specific Precision

This project aims to acquire the shapes of physical objects in real time, with precision guaranteed with respect to the noise model of the sensor devices.

Path-Space Manifolds for Noise-Free Light Transport

The project aims to develop new statistical and algorithmic methods to improve light-transport simulation for offline rendering.

MAKE-IT-FAB: Modeling of Shapes for Personal Fabrication

The aim of this project is to investigate and contribute to shape modeling and geometry processing for personal fabrication, a trend that is currently receiving increased attention in science and industry. Our goal is to contribute novel algorithmic solutions for fabrication-aware shape processing and interactive modeling.

ILLUSTRARE: Integrative Visual Abstraction of Molecular Data

FWF - I 2953-N31: Integrative Visual Abstraction of Molecular Data

BioNetIllustration: User Centric Illustrations of Biological Networks

In living systems, one molecule is commonly involved in several distinct physiological functions. The roles of molecules are commonly summarized in pathway diagrams, which, however, are abstract and hierarchically nested, and thus difficult to comprehend, especially for non-expert audiences. The primary goal of this visualization research is to intuitively support a comprehensive understanding of the relationships among biological networks using interactively computed illustrations. Illustrations, especially in biology textbooks, are carefully designed to clearly present reactions between organs as well as interactions within cells. The technical content of this proposal is therefore the automatic generation of illustrative visualizations of biological networks. Automatically generating illustrations in a hand-drawn style has been a challenging task because it is difficult to describe algorithmically a human creative process such as evaluating and selecting significant information and composing meaningful explanations in a visually plausible manner. The project also involves experts from several disciplines, including network and medical visualization, data mining, systems biology, and perceptual psychology. The result will provide a new direction for physiological process analysis and accelerate knowledge transfer not only among experts but also to the public.

Acknowledgment: The project has received funding from the European Union Horizon 2020 research and innovation programme under the Marie Sklodowska-Curie grant agreement No. 747985.