Superhumans - Walking Through Walls

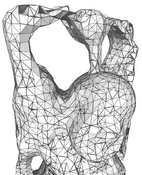

In recent years, virtual and augmented reality have gained widespread attention because of newly developed head-mounted displays. For the first time, mass-market penetration seems plausible. Range sensors are also on the verge of being integrated into smartphones, as evidenced by prototypes such as the Google Tango device, making ubiquitous online acquisition of 3D data a possibility. The combination of these two technologies, displays and sensors, promises applications in which users are immersed directly in 3D data that has just been captured live. However, the captured data must be processed and structured before it can be displayed: for example, sensor noise must be removed and normals must be estimated for local surface reconstruction. The challenge is that these operations involve a large amount of data and, to ensure a lag-free user experience, must be performed in real time, i.e., in just a few milliseconds per frame. In this proposal, we exploit the fact that dynamic point clouds captured in real time are often relevant for display and interaction only in the current frame and inside the current view frustum. In particular, we propose a new view-dependent data structure that permits efficient connectivity creation and traversal of unstructured data, which will speed up surface recovery, e.g., for collision detection. Occlusions are classified at no extra cost, which will allow quick access to occluded layers in the current view. This enables new methods for exploring and manipulating dynamic 3D scenes that go beyond interaction techniques relying on physics-based metaphors such as walking or flying, lifting interaction with 3D environments to a “superhuman” level.
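
To make the idea of a view-dependent structure concrete, the following is a minimal sketch and not the proposed data structure itself, whose design the text above does not specify. It assumes a simple pinhole camera looking along +z and buckets camera-space points by the pixel they project to. Sorting each bucket by depth yields occlusion layers as a by-product, and neighbouring pixels give an approximate connectivity. The function names (`build_layered_grid`, `layer`, `neighbours`) and parameters (`focal`, `near`) are hypothetical, chosen only for illustration.

```python
import math
from collections import defaultdict


def build_layered_grid(points, focal, width, height, near=0.1):
    """Bucket camera-space points by the pixel they project to.

    points: iterable of (x, y, z) in camera space, camera looking along +z.
    Returns {(u, v): [(depth, point_index), ...]}, each list sorted front to back.
    Assumption: pinhole projection with the principal point at the image centre.
    """
    grid = defaultdict(list)
    for idx, (x, y, z) in enumerate(points):
        if z <= near:
            continue  # behind the camera or closer than the near plane
        u = math.floor(focal * x / z + width / 2)
        v = math.floor(focal * y / z + height / 2)
        if 0 <= u < width and 0 <= v < height:  # keep only points inside the frustum
            grid[(u, v)].append((z, idx))
    for cell in grid.values():
        cell.sort()  # index 0 is the visible point, higher indices are occluded
    return dict(grid)


def layer(grid, k):
    """Return {pixel: point_index} for the k-th depth entry per pixel (0 = visible)."""
    return {px: cell[k][1] for px, cell in grid.items() if len(cell) > k}


def neighbours(grid, pixel, k=0):
    """Point indices stored at depth entry k of the 4-connected neighbouring pixels."""
    u, v = pixel
    result = []
    for du, dv in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        cell = grid.get((u + du, v + dv))
        if cell and len(cell) > k:
            result.append(cell[k][1])
    return result


if __name__ == "__main__":
    cloud = [(0, 0, 1), (0, 0, 2), (0.15, 0, 1), (0, 0, -1), (100, 0, 1)]
    grid = build_layered_grid(cloud, focal=10, width=8, height=8)
    print(layer(grid, 0))  # visible points
    print(layer(grid, 1))  # points hidden behind the first layer
```

In this simplified form, the k-th entry of a pixel is only the k-th closest point along that viewing ray. Grouping such entries into coherent surfaces would need an additional depth-continuity test, which is omitted here.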