Information

- Publication Type: Journal Paper (without talk)

- Workgroup(s)/Project(s):

- Date: November 2012

- ISSN: 0097-8493

- Journal: Computers & Graphics

- Number: 7

- Volume: 36

- Pages: 846 – 856

- Keywords: Differential rendering, Reconstruction, Instant radiosity, Microsoft Kinect, Real-time global illumination, Mixed reality

Abstract

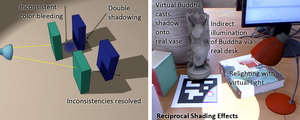

In this paper we present a novel plausible rendering method for mixed reality systems, which is useful for many real-life application scenarios, like architecture, product visualization or edutainment. To allow virtual objects to seamlessly blend into the real environment, the real lighting conditions and the mutual illumination effects between real and virtual objects must be considered, while maintaining interactive frame rates. The most important such effects are indirect illumination and shadows cast between real and virtual objects. Our approach combines Instant Radiosity and Differential Rendering. In contrast to some previous solutions, we only need to render the scene once in order to find the mutual effects of virtual and real scenes. In addition, we avoid artifacts like double shadows or inconsistent color bleeding which appear in previous work. The dynamic real illumination is derived from the image stream of a fish-eye lens camera. The scene gets illuminated by virtual point lights, which use imperfect shadow maps to calculate visibility. A sufficiently fast scene reconstruction is done at run-time with Microsoft's Kinect sensor. Thus a time-consuming manual pre-modeling step of the real scene is not necessary. Our results show that the presented method highly improves the illusion in mixed-reality applications and significantly diminishes the artificial look of virtual objects superimposed onto real scenes.
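For readers unfamiliar with Differential Rendering, the following is a minimal sketch of the classic two-render compositing step that the technique is generally known for. It is not the method of this paper, which, according to the abstract, needs only a single render of the scene. The function name `differential_composite`, its parameters, and the single-channel list-of-rows image layout are all assumptions made for illustration.

```python
def differential_composite(camera, with_virtual, without_virtual, virtual_mask):
    """Classic differential rendering composite for one color channel.

    Hypothetical helper for illustration only. Each image is a list of rows
    of floats in [0, 1]:
      camera          - the captured real-world frame
      with_virtual    - rendering of the real-scene model plus virtual objects
      without_virtual - rendering of the real-scene model alone
      virtual_mask    - True where a virtual object covers the pixel
    """
    out = []
    rows = zip(camera, with_virtual, without_virtual, virtual_mask)
    for cam_row, wv_row, wo_row, mask_row in rows:
        row = []
        for cam, wv, wo, is_virtual in zip(cam_row, wv_row, wo_row, mask_row):
            if is_virtual:
                # Virtual surfaces are taken directly from the rendering.
                value = wv
            else:
                # Real surfaces keep the camera pixel and receive only the
                # change caused by the virtual objects (shadow, color bleeding).
                value = cam + (wv - wo)
            # Clamp to the displayable range.
            row.append(min(1.0, max(0.0, value)))
        out.append(row)
    return out


# One row, three pixels: unaffected real, shadowed real, virtual object.
camera = [[0.8, 0.8, 0.8]]
without_virtual = [[0.5, 0.5, 0.5]]
with_virtual = [[0.5, 0.25, 0.75]]
virtual_mask = [[False, False, True]]
result = differential_composite(camera, with_virtual, without_virtual, virtual_mask)
```

In this toy input the first pixel is left at the camera value, the second is darkened by the difference between the two renderings (a shadow cast by a virtual object onto a real surface), and the third is replaced by the rendered virtual surface.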

BibTeX

@article{knecht_martin_2012_RSMR,
  title =      "Reciprocal Shading for Mixed Reality",
  author =     "Martin Knecht and Christoph Traxler and Oliver Mattausch and
               Michael Wimmer",
  year =       "2012",
  abstract =   "In this paper we present a novel plausible rendering method
               for mixed reality systems, which is useful for many
               real-life application scenarios, like architecture, product
               visualization or edutainment. To allow virtual objects to
               seamlessly blend into the real environment, the real
               lighting conditions and the mutual illumination effects
               between real and virtual objects must be considered, while
               maintaining interactive frame rates. The most important such
               effects are indirect illumination and shadows cast between
               real and virtual objects. Our approach combines Instant
               Radiosity and Differential Rendering. In contrast to some
               previous solutions, we only need to render the scene once in
               order to find the mutual effects of virtual and real scenes.
               In addition, we avoid artifacts like double shadows or
               inconsistent color bleeding which appear in previous work.
               The dynamic real illumination is derived from the image
               stream of a fish-eye lens camera. The scene gets illuminated
               by virtual point lights, which use imperfect shadow maps to
               calculate visibility. A sufficiently fast scene
               reconstruction is done at run-time with Microsoft's Kinect
               sensor. Thus a time-consuming manual pre-modeling step of
               the real scene is not necessary. Our results show that the
               presented method highly improves the illusion in
               mixed-reality applications and significantly diminishes the
               artificial look of virtual objects superimposed onto real
               scenes.",
  month =      nov,
  issn =       "0097-8493",
  journal =    "Computers \& Graphics",
  number =     "7",
  volume =     "36",
  pages =      "846--856",
  keywords =   "Differential rendering, Reconstruction, Instant radiosity,
               Microsoft Kinect, Real-time global illumination, Mixed
               reality",
  URL =        "https://www.cg.tuwien.ac.at/research/publications/2012/knecht_martin_2012_RSMR/",
}
