Abstract

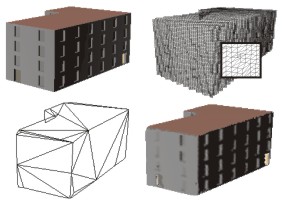

This paper presents a new approach to generating textured depth meshes (TDMs), an impostor-based scene representation that can be used to accelerate the rendering of static polygonal models. The TDMs are precalculated for a fixed viewing region (view cell). The approach first uses a layered rendering of the scene to produce a voxel-based representation. Next, a highly complex polygon mesh is constructed that covers all the voxels. Afterwards, this mesh is simplified using a special error metric that ensures all voxels stay covered. Finally, the remaining polygons are resampled using the voxel representation to obtain their textures. The contribution of our approach is manifold: first, it can handle polygonal models without any knowledge about their structure. Second, only scene parts that may become visible from within the view cell are represented, thereby cutting down on impostor complexity and storage costs. Third, an error metric guarantees that the impostors are practically indistinguishable from the original model (i.e., no rubber-sheet effects or holes appear, unlike in most previous approaches). Furthermore, current graphics hardware is exploited for the construction and use of the impostors.

Funding: Austrian Science Fund, contract no. P-13867-INF

Contact: Stefan Jeschke, Michael Wimmer

Keywords: virtual environments, walkthroughs, image-based rendering, impostors, textured depth meshes