Description

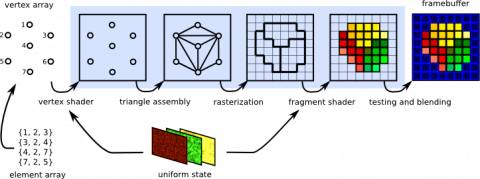

The modern hardware rasterization pipeline is fairly complex. On the way from the original vertices to the output pixels, triangles are transformed by various matrices, clipped, projected into screen space, quantized, and rasterized. Each of these steps can introduce numerical error, because floating-point operations have only finite precision and because quantization snaps coordinates to a discrete grid.
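
The following C++ sketch walks a single vertex through a simplified version of these steps, once in `float` and once in `double` as a higher-precision reference, so that the accumulated difference can be measured. Clipping is omitted. The row-major matrix layout, the viewport mapping, the 8 sub-pixel bits, and round-to-nearest snapping are assumptions for illustration only; the actual rules are defined by each API and GPU (Vulkan, for example, reports the sub-pixel precision as `subPixelPrecisionBits` in `VkPhysicalDeviceLimits`). All names in the code are hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Hypothetical simplified vertex path, templated on the scalar type so the same
// code can run in float (as a GPU might) and in double (as a reference).
template <typename T>
struct Vec4 { T x, y, z, w; };

template <typename T>
struct Window { T x, y; };

// Row-major 4x4 matrix times column vector (assumed layout): one dot product
// per row, i.e. several multiplications and additions, each of them rounded.
template <typename T>
Vec4<T> transform(const T m[16], const Vec4<T>& v) {
  return {m[0] * v.x + m[1] * v.y + m[2] * v.z + m[3] * v.w,
          m[4] * v.x + m[5] * v.y + m[6] * v.z + m[7] * v.w,
          m[8] * v.x + m[9] * v.y + m[10] * v.z + m[11] * v.w,
          m[12] * v.x + m[13] * v.y + m[14] * v.z + m[15] * v.w};
}

// Perspective divide and viewport transform (assumed: [-1, 1] NDC, viewport at
// the origin, no y flip). Assumes the vertex already passed clipping, so w > 0.
template <typename T>
Window<T> toWindow(const Vec4<T>& clip, T width, T height) {
  assert(clip.w > T(0));
  const T xd = clip.x / clip.w;  // normalized device coordinates
  const T yd = clip.y / clip.w;
  return {(xd * T(0.5) + T(0.5)) * width, (yd * T(0.5) + T(0.5)) * height};
}

// Snap a window coordinate to a fixed-point grid with `subpixelBits` fractional
// bits. Scaling by a power of two is exact; llround rounds to nearest (ties away
// from zero), which is an assumption about the hardware rule.
template <typename T>
std::int64_t snap(T windowCoord, int subpixelBits) {
  return std::llround(std::ldexp(windowCoord, subpixelBits));
}
```

For example, passing an OpenGL-style perspective matrix and the vertex (0.3, -0.7, -5, 1) through both instantiations with a 1920×1080 viewport gives window coordinates that differ by only a small fraction of a pixel. Near a rounding boundary, however, even such a small difference can move the snapped value to a neighboring sub-pixel step.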

To predict, for example, whether a triangle will be back-face culled, all of these errors must be taken into account. With detailed knowledge of how much error each step introduces, we can account for it and, for instance, perform robust and optimal back-face culling in software. Ideally, we can find an upper bound on the error that is fast to compute, so that as much geometry as possible can be culled early on to improve real-time rendering performance.
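
For the final step, the facing decision itself, the sign of the doubled signed area of a window-space triangle can be tested conservatively with a floating-point filter. The sketch below adapts the stage-A error bound of Shewchuk's adaptive `orient2d` predicate to single precision; this is a well-known technique swapped in for illustration, not a result of this project. It assumes IEEE 754 `float` arithmetic with round-to-nearest, no underflow, no extended-precision evaluation or FMA contraction by the compiler, and that counter-clockwise (positive-area) triangles are front-facing; the real convention depends on the API's front-face setting and the direction of the window-space y axis. It covers only the rounding in this final expression, not the transform or snapping errors, and `classifyFacing` is a hypothetical name.

```cpp
#include <cassert>
#include <cmath>
#include <limits>

// Possible outcomes of a conservative facing test.
enum class Facing { Front, Back, Uncertain };

// Sign of the doubled signed area of triangle (a, b, c) with a rounding-error
// filter. Assumes counter-clockwise (positive area) means front-facing.
Facing classifyFacing(float ax, float ay, float bx, float by, float cx, float cy) {
  // Unit roundoff of float with round-to-nearest: half of machine epsilon, 2^-24.
  const float u = std::numeric_limits<float>::epsilon() * 0.5f;
  // Shewchuk's stage-A factor (3 + 16u)u; the 16u term also covers the rounding
  // made while evaluating errBound itself.
  const float errFactor = (3.0f + 16.0f * u) * u;

  // Doubled signed area as (a - c) x (b - c), evaluated in float.
  const float detLeft = (ax - cx) * (by - cy);
  const float detRight = (ay - cy) * (bx - cx);
  const float det = detLeft - detRight;

  // Overflow invalidates the bound and shows up as a non-finite det.
  if (!std::isfinite(det)) return Facing::Uncertain;

  const float errBound = errFactor * (std::fabs(detLeft) + std::fabs(detRight));
  if (det > errBound) return Facing::Front;
  if (-det > errBound) return Facing::Back;
  return Facing::Uncertain;  // sign cannot be decided safely in float
}
```

Returning `Uncertain` instead of guessing is what keeps such a test safe: a triangle would only be culled in software when the sign is certain, and uncertain triangles are simply passed on to the hardware.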

Tasks

Your job will be to acquire a thorough understanding of all the individual steps that a triangle goes through in the modern rasterization pipeline. You will also have to determine what the relevant specifications state about the minimum and maximum error that each step can introduce. From this, you should derive upper bounds on the error of the various operations that can be computed easily. These bounds should then be applied in a real-time application to decide robustly, ahead of time, whether specific triangles will be culled.
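
As one example of an easily computed bound, consider the snapping step in isolation. Let a, b, c be the exact window-space vertices, and assume that snapping moves each vertex coordinate by at most δ (for round-to-nearest onto a grid with s sub-pixel bits, δ = 2^-(s+1) pixels; the actual snapping rule must be checked against the API specification). The edge vectors e1 = b - a and e2 = c - a then change by vectors d1, d2 whose components are at most 2δ in magnitude, and the change of the doubled signed area A can be bounded as follows:

```latex
\begin{aligned}
\mathbf{e}_1 &= \mathbf{b} - \mathbf{a}, \qquad \mathbf{e}_2 = \mathbf{c} - \mathbf{a}, \qquad
A = \mathbf{e}_1 \times \mathbf{e}_2 = e_{1,x} e_{2,y} - e_{1,y} e_{2,x}, \\
\tilde{\mathbf{e}}_i &= \mathbf{e}_i + \mathbf{d}_i, \qquad \|\mathbf{d}_i\|_\infty \le 2\delta \quad (i = 1, 2), \\
\tilde{A} - A &= \mathbf{e}_1 \times \mathbf{d}_2 + \mathbf{d}_1 \times \mathbf{e}_2 + \mathbf{d}_1 \times \mathbf{d}_2, \\
|\mathbf{e}_1 \times \mathbf{d}_2| &\le |e_{1,x}|\,|d_{2,y}| + |e_{1,y}|\,|d_{2,x}| \le 2\delta \|\mathbf{e}_1\|_1,
\qquad |\mathbf{d}_1 \times \mathbf{d}_2| \le 8\delta^2, \\
|\tilde{A} - A| &\le 2\delta \left( \|\mathbf{e}_1\|_1 + \|\mathbf{e}_2\|_1 \right) + 8\delta^2 .
\end{aligned}
```

Hence a triangle with A < -(2δ(‖e1‖₁ + ‖e2‖₁) + 8δ²) is guaranteed to keep a negative signed area after snapping. This bound still has to be combined with the rounding errors of the earlier floating-point stages, since in practice A itself is only known approximately.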

Requirements

- Knowledge of the English language (source code comments and the final report should be in English)

- Knowledge of at least one high-performance programming language (e.g. C++)

- Knowledge of at least one common graphics API (Vulkan/DirectX/OpenGL)

- Willingness to dissect the GPU rendering pipeline to find out how it works in detail

- Any additional relevant knowledge is an advantage

Environment

The project should yield a set of logically derived error bounds for triangle primitives, together with methods to compute them. Their effectiveness should be demonstrated in simple 3D applications, showing that the derived bounds are in fact guaranteed to be robust.
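
A first robustness check can be automated. The hypothetical harness below generates small random triangles and predicts their facing before snapping with the bound |Ã - A| ≤ 2δ(‖e1‖₁ + ‖e2‖₁) + 8δ², where A and Ã are the doubled signed areas before and after snapping and e1 = b - a, e2 = c - a are the edge vectors. It then snaps the vertices to a grid with 8 sub-pixel bits (assuming round-to-nearest, so δ is half a sub-pixel step) and compares every certain prediction with the exact integer area of the snapped triangle. Input coordinates are restricted to multiples of 2^-10 pixel in [0, 2048] so that every `double` operation in the prediction is exact, which isolates the snapping bound. A complete demonstration would also chain in the floating-point bounds of the earlier stages and compare against what a real GPU actually rasterizes.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>
#include <random>

// Result of checking the snapping bound on random triangles.
struct HarnessResult {
  long long tested = 0;      // triangles generated
  long long certain = 0;     // triangles whose facing was predicted with certainty
  long long violations = 0;  // certain predictions contradicted after snapping
};

HarnessResult runSnapHarness(int count, unsigned seed) {
  assert(count >= 0);
  constexpr double kSubpixel = 256.0;  // 8 sub-pixel bits (assumed)
  constexpr double kDelta = 0.5;       // max snapping displacement, sub-pixel units
  std::mt19937 rng(seed);
  // Coordinates in units of 2^-10 pixel: a base point in [4, 2044] pixels plus
  // per-vertex offsets of at most 4 pixels, so every vertex lies in [0, 2048].
  std::uniform_int_distribution<std::int64_t> base(4 * 1024, 2044 * 1024);
  std::uniform_int_distribution<std::int64_t> offset(-4 * 1024, 4 * 1024);

  HarnessResult r;
  for (int i = 0; i < count; ++i) {
    const std::int64_t ox = base(rng);
    const std::int64_t oy = base(rng);
    double x[3], y[3];          // exact window coordinates, in sub-pixel units
    std::int64_t sx[3], sy[3];  // snapped fixed-point coordinates
    for (int v = 0; v < 3; ++v) {
      x[v] = static_cast<double>(ox + offset(rng)) / 1024.0 * kSubpixel;
      y[v] = static_cast<double>(oy + offset(rng)) / 1024.0 * kSubpixel;
      sx[v] = std::llround(x[v]);  // round to nearest: |sx - x| <= 0.5
      sy[v] = std::llround(y[v]);
    }

    // Doubled signed area before snapping and the snapping bound; all operands
    // are small multiples of 1/4, so both values are exact in double.
    const double e1x = x[1] - x[0], e1y = y[1] - y[0];
    const double e2x = x[2] - x[0], e2y = y[2] - y[0];
    const double area = e1x * e2y - e1y * e2x;
    const double bound =
        2.0 * kDelta * (std::fabs(e1x) + std::fabs(e1y) + std::fabs(e2x) + std::fabs(e2y)) +
        8.0 * kDelta * kDelta;

    // Exact doubled signed area of the snapped triangle, in integer arithmetic.
    const std::int64_t snappedArea =
        (sx[1] - sx[0]) * (sy[2] - sy[0]) - (sy[1] - sy[0]) * (sx[2] - sx[0]);

    ++r.tested;
    if (area > bound || area < -bound) {
      ++r.certain;
      // A certain prediction must match the sign of the snapped area.
      const bool predictedPositive = area > 0.0;
      if (predictedPositive ? snappedArea <= 0 : snappedArea >= 0) ++r.violations;
    }
  }
  return r;
}
```

With a call such as `runSnapHarness(10000, 42)`, `violations` must be zero if the bound is correct; a non-zero count would point to a flaw in the derivation or in the assumed snapping rule.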