Description

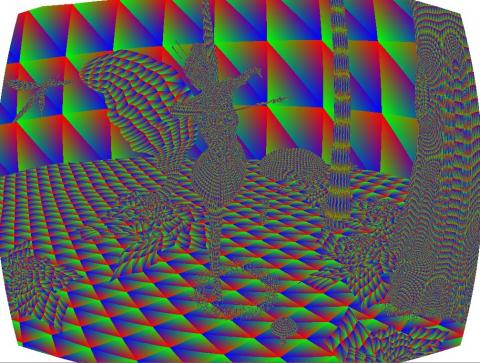

Rendering for virtual reality has different requirements than rendering for standard displays. The lenses in most head-mounted displays introduce a radial distortion, so the image on the display must be pre-warped with a barrel distortion to counteract it. This deformation is non-linear, so the distorted image cannot be rendered directly with hardware rasterization. Screen-space effects can warp an already rendered image instead, but they degrade visual accuracy and require the original, undistorted image to be rendered at a higher resolution.
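To illustrate why a non-linear deformation does not fit hardware rasterization, the following minimal C++ sketch applies a polynomial radial model, r' = r (1 + k1 r^2 + k2 r^4), to the endpoints and the midpoint of a triangle edge. The model, the coefficients `kK1` and `kK2`, and all function names are assumptions chosen for illustration; they are not taken from the TU Graz rasterizer or from any particular headset.

```cpp
#include <cassert>
#include <cmath>

// 2D point in normalized lens coordinates, with the lens center at (0, 0).
struct Vec2 {
    float x;
    float y;
};

// Hypothetical coefficients of a polynomial radial model.
// Real values are lens-specific; a negative k1 yields a barrel shape.
constexpr float kK1 = -0.25f;
constexpr float kK2 = 0.05f;

// Scale a point radially: r' = r * (1 + k1 * r^2 + k2 * r^4).
Vec2 distort(Vec2 p) {
    const float r2 = p.x * p.x + p.y * p.y;
    const float scale = 1.0f + kK1 * r2 + kK2 * r2 * r2;
    return {p.x * scale, p.y * scale};
}

// Linear interpolation between two points, t in [0, 1].
Vec2 lerp(Vec2 a, Vec2 b, float t) {
    assert(t >= 0.0f && t <= 1.0f);
    return {a.x + t * (b.x - a.x), a.y + t * (b.y - a.y)};
}

// Distance between the distorted midpoint of an edge and the midpoint of
// the straight line connecting the distorted endpoints. A linear mapping
// would always return 0 here.
float edgeBend(Vec2 a, Vec2 b) {
    const Vec2 curved = distort(lerp(a, b, 0.5f));
    const Vec2 straight = lerp(distort(a), distort(b), 0.5f);
    return std::hypot(curved.x - straight.x, curved.y - straight.y);
}
```

Under these assumed coefficients, `edgeBend` is non-zero for an off-center edge such as the one from (-1, 0.5) to (1, 0.5), while an edge through the lens center stays straight. A hardware rasterizer that only distorts the vertices would draw the straight version, which is why the distorted image has to be produced by other means.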

The graphics group at TU Graz has designed a software rasterizer that runs on the GPU and can produce barrel-distorted images directly, thus making better use of each pixel. The code is written in CUDA, and its performance beats that of other, comparable software techniques. However, it is currently a desktop application and requires a CUDA-capable GPU. Since many virtual reality solutions are powered by smartphones or built-in GPUs without this capability, it would be great to have a version of the non-linear rasterizer that can run on mobile devices.

Tasks

Your job would be to first find a suitable working setup (e.g., pick an available state-of-the-art mobile device at the institute or use your own for development) and a graphics API for it that you are comfortable with and that can run compute jobs. The non-linear rasterizer should then be ported from its current CUDA form to this mobile API so that it runs on the mobile device. Once finished, you should evaluate its performance against alternative non-linear rendering options, both quantitatively and qualitatively. A small VR application should prove that your approach works.

Requirements

- Knowledge of the English language (source code comments and final report should be in English)

- Knowledge of CUDA and C++ to understand the original source

- Knowledge of basic VR applications and mobile devices

- Knowledge of at least one compute-capable graphics API that can be applied to a VR-ready mobile device of your choice (Vulkan recommended)

- More knowledge is always advantageous

Environment

The project should be implemented as a standalone application and run on a suitable device, e.g., the built-in GPU of an Oculus Go, or the mobile GPU of a smartphone/tablet that can power other, similar VR solutions (Daydream, GoogleVR...).

Additional Images and Files

| Attachment | Size |
|---|---|
| distortion.png | 57.02 KB |