|

Exploring Dynamical Systems

Collaborative augmented reality

Project duration: 1996-1997

Contact: Anton Fuhrmann |

Description

|

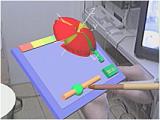

Augmented reality was used for the interactive visualization of complex

dynamical systems. Using this platform, multiple researchers were able

to collaborate on the analysis of phase space representations of dynamical

systems to gain insight into this complex topic.

|

Application

|

Scientific visualization is an important tool for scientists to deal with

very hard problems that often exceed the limits of imagination. Real-time

exploration of three-dimensional mathematical structures using augmented

reality allows researchers to rapidly examine many aspects of the problem and leads

to faster understanding. We have studied dynamical systems, but the same

technology may be used for exploration of any kind of scientific data.

|

Problems

|

We needed to create a system capable of displaying dynamical systems in real

time for multiple users. However, computing such data takes considerable time,

which is at odds with the real-time requirements. Furthermore, an integration

with an existing desktop visualization system - AVS - was desired, because many

data sets and visualization tools were already available on this platform.

|

Approach

|

The Studierstube platform was extended to communicate with AVS via a dedicated

network interface. While a tightly coupled real-time display loop was provided

for rapid visual feedback, a separate communication loop between Studierstube

and AVS was used for sending steering commands to AVS and computed geometry

back to Studierstube. This architecture, together with simple facilities for

direct manipulation of the data, led to a very responsive solution.
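
The decoupling of the two loops can be illustrated with a minimal sketch. It is not the Studierstube or AVS code: all names (`slow_geometry_server`, `run_display`, the geometry dictionary) are hypothetical, and in-process threads and queues stand in for the network interface. The display loop never blocks on the computation; it keeps drawing the most recent geometry and picks up new results whenever they arrive.

```python
import queue
import threading
import time


def slow_geometry_server(commands, results):
    """Hypothetical stand-in for the remote visualization system.

    Receives steering commands and sends back computed geometry
    after a delay that simulates an expensive computation.
    """
    while True:
        param = commands.get()
        if param is None:  # sentinel: shut down
            break
        time.sleep(0.05)  # simulated computation time
        vertices = [(param * i, float(i)) for i in range(3)]
        results.put({"param": param, "vertices": vertices})


def run_display(frames, steering_at, frame_time=0.01):
    """Run a fixed number of display frames.

    steering_at maps a frame index to a steering parameter that the
    user is assumed to set at that frame. Returns, for each frame,
    the parameter of the geometry shown (None if none has arrived).
    """
    commands = queue.Queue()
    results = queue.Queue()
    worker = threading.Thread(
        target=slow_geometry_server, args=(commands, results)
    )
    worker.start()

    geometry = None
    shown = []
    for frame in range(frames):
        # Communication side: forward a steering command if one is due.
        if frame in steering_at:
            commands.put(steering_at[frame])
        # Display side: take new geometry only if it is already there.
        try:
            geometry = results.get_nowait()
        except queue.Empty:
            pass  # keep showing the previous geometry
        shown.append(None if geometry is None else geometry["param"])
        time.sleep(frame_time)  # stands in for rendering one frame

    commands.put(None)
    worker.join()
    return shown


if __name__ == "__main__":
    log = run_display(frames=30, steering_at={0: 2.0})
    print(log)
```

In this sketch the first few frames show nothing, because the computation has not finished yet, while the frame rate stays constant throughout. That is the property the separate communication loop is meant to provide.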

|

Publications

|

|